What’s New at EdgeTier: Q1 2026 Product Updates

Every quarter, our team ships features designed to make your day-to-day work a little smoother, a little faster, and a


AI quality assurance in a contact centre is the use of artificial intelligence to automatically evaluate every customer interaction against a defined set of quality criteria, covering tone, compliance, resolution, empathy, and more, without relying on manual sampling. Where traditional QA reviews 2–5% of conversations, AI QA reviews 100% of them, in real time.

Quality assurance in a contact centre is the process of reviewing customer interactions to make sure agents are delivering the right experience: following correct procedures, communicating clearly, resolving issues effectively, and representing the brand well.

In most contact centres, QA has historically been a very manual process. A small team of analysts listens to a batch of calls or reads through a selection of chat transcripts, scores them against a scorecard, and feeds the results back to team leaders. It is time-consuming, expensive, and (critically) it only ever covers a fraction of what is actually happening.

AI quality assurance changes the mechanics of this entirely. Instead of a human analyst reviewing a handful of conversations, AI models analyse every single interaction across every channel: voice, chat, email, and messaging. Each conversation is automatically scored, tagged, and flagged where relevant, giving QA teams and team leaders a complete, consistent picture of quality across the entire operation.

The result is faster QA and a fundamentally different level of visibility into how your contact centre is actually performing.

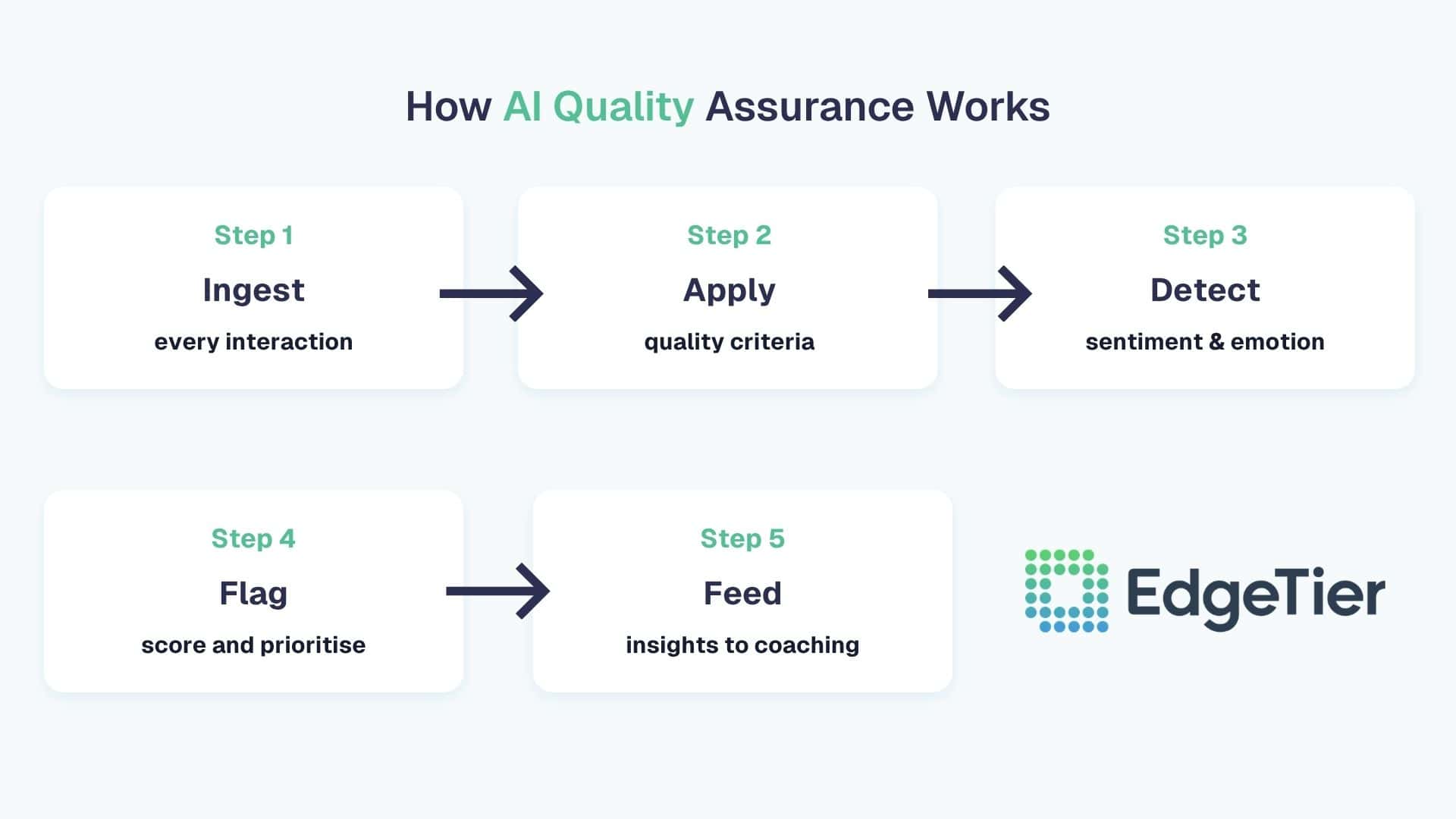

AI QA is not a single technology but a set of capabilities working together. Here is how the process works in practice, from data ingestion to agent coaching.

The first step is connecting the AI system to your contact centre’s interaction data: calls, chats, emails, and any other channels you operate. Most AI QA platforms integrate directly with your existing contact centre software, CRM, or telephony stack, pulling in interaction data in real time or at regular intervals.

Voice interactions are transcribed automatically. Text-based interactions are ingested as-is. The result is a structured, searchable dataset of every conversation your team has had.

Once interactions are ingested, the AI evaluates each one against a customised quality framework that reflects your organisation’s standards. This might include:

These criteria are defined by your QA team and configured in the platform. The AI applies them consistently across every conversation, eliminating the scoring variability that comes with different human analysts applying the same rubric differently.
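As a rough illustration of what applying the same rubric to every conversation can look like, here is a minimal sketch of a weighted scorecard. The criterion names, the weights, and the `score_interaction` helper are all hypothetical, and the sketch assumes the AI has already produced a pass/fail verdict for each criterion; it is not a description of how any particular platform computes scores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float  # relative importance, chosen by the QA team (hypothetical values below)


def score_interaction(results: dict[str, bool], scorecard: list[Criterion]) -> float:
    """Return a 0-100 score: the weighted share of criteria the interaction met."""
    total_weight = sum(c.weight for c in scorecard)
    if total_weight <= 0:
        raise ValueError("scorecard must have a positive total weight")
    # A criterion with no verdict is treated as not met.
    earned = sum(c.weight for c in scorecard if results.get(c.name, False))
    return round(100 * earned / total_weight, 1)


# Hypothetical scorecard built from the kinds of criteria mentioned in the text.
SCORECARD = [
    Criterion("tone", 1.0),
    Criterion("compliance", 3.0),
    Criterion("resolution", 4.0),
    Criterion("empathy", 2.0),
]

# Assumed per-criterion verdicts for one conversation.
example = {"tone": True, "compliance": True, "resolution": False, "empathy": True}
print(score_interaction(example, SCORECARD))  # 60.0
```

Because the same function and weights are used for every conversation, two interactions with the same verdicts always receive the same score, which is the consistency the paragraph above describes.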

Beyond structured criteria, AI QA systems analyse the emotional dynamics of a conversation. Sentiment analysis identifies where customer frustration is building, where an agent’s response de-escalated or inadvertently escalated a situation, and where empathy was or was not present.

This matters because some of the most important quality signals are not captured by a checklist. An agent might tick every box on a scorecard while still delivering a cold, robotic experience. Sentiment analysis surfaces those gaps.

Every interaction receives a quality score. Interactions that fall below a threshold, or that contain specific flags (a compliance risk, an unusually high frustration level, a missed resolution opportunity, etc.), are surfaced for human review.

This changes how QA analysts spend their time. Rather than randomly sampling conversations and hoping they find something useful, analysts are directed to the interactions most likely to contain a genuine quality issue or coaching opportunity. The random sample becomes a targeted review queue.
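The move from random sampling to a targeted queue can be sketched in a few lines. This is only an illustration under stated assumptions: the `Interaction` fields, the threshold of 70, and the flag names and priorities are hypothetical, not values taken from any real platform.

```python
from dataclasses import dataclass, field


@dataclass
class Interaction:
    interaction_id: str
    score: float  # quality score, 0-100
    flags: set[str] = field(default_factory=set)


SCORE_THRESHOLD = 70.0  # hypothetical cut-off for "needs a human look"
FLAG_PRIORITY = {       # hypothetical ordering: higher means more urgent
    "compliance_risk": 3,
    "high_frustration": 2,
    "missed_resolution": 1,
}


def needs_review(interaction: Interaction) -> bool:
    """An interaction is surfaced if it scores below the threshold or carries any flag."""
    return interaction.score < SCORE_THRESHOLD or bool(interaction.flags)


def build_review_queue(interactions: list[Interaction]) -> list[Interaction]:
    """Order surfaced interactions by most urgent flag first, then by lowest score."""
    def sort_key(interaction: Interaction) -> tuple[int, float]:
        worst_flag = max((FLAG_PRIORITY.get(f, 0) for f in interaction.flags), default=0)
        return (-worst_flag, interaction.score)

    return sorted((i for i in interactions if needs_review(i)), key=sort_key)


batch = [
    Interaction("a", 92.0),
    Interaction("b", 55.0),
    Interaction("c", 88.0, {"compliance_risk"}),
    Interaction("d", 64.0, {"high_frustration"}),
]
print([i.interaction_id for i in build_review_queue(batch)])  # ['c', 'd', 'b']
```

Interaction "a" never reaches an analyst, while "c" goes to the top of the queue despite its high score, because a compliance flag outranks a low score in this particular ordering.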

The final step is closing the loop. AI QA data flows into coaching workflows, giving team leaders a clear, evidence-based picture of where each agent needs support. Rather than generic feedback, coaching becomes specific: an agent who consistently struggles with frustrated customers gets targeted training on de-escalation; an agent missing compliance steps gets flagged before it becomes a regulatory issue.
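One simple way to turn flagged conversations into agent-level coaching topics is to count recurring flags per agent. The sketch below assumes flags arrive as `(agent_id, flag)` pairs and that a flag becomes a coaching topic once it recurs three times; the function name, the data shape, and the cut-off are hypothetical.

```python
from collections import Counter, defaultdict


def coaching_focus(flagged: list[tuple[str, str]], min_occurrences: int = 3) -> dict[str, list[str]]:
    """Map each agent to the flags that recur often enough to justify targeted coaching."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for agent_id, flag in flagged:
        counts[agent_id][flag] += 1

    focus: dict[str, list[str]] = {}
    for agent_id, flag_counts in counts.items():
        # most_common() lists the most frequent flag first.
        recurring = [flag for flag, n in flag_counts.most_common() if n >= min_occurrences]
        if recurring:
            focus[agent_id] = recurring
    return focus


# Hypothetical flags raised over one review period.
flagged = (
    [("agent_a", "high_frustration")] * 3
    + [("agent_a", "missed_compliance_step")]
    + [("agent_b", "missed_compliance_step")] * 3
    + [("agent_c", "high_frustration")]
)
print(coaching_focus(flagged))
# {'agent_a': ['high_frustration'], 'agent_b': ['missed_compliance_step']}
```

One-off flags are ignored, so agent_c does not appear: the output highlights patterns rather than isolated incidents.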

EdgeTier Coach is built around exactly this workflow, connecting QA data directly to agent coaching, so that insights translate into improvement rather than sitting in a report.

Most contact centres still operate QA on a sample of 2–5% of interactions. The maths is straightforward: if your team handles 10,000 conversations a week and your QA team reviews 300 of them, you have a 97% blind spot.
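The arithmetic behind that figure, plus one way to think about what the blind spot means in practice, can be worked through as follows. The first function reproduces the numbers in the text. The second is an added assumption: it treats the review sample as truly random and asks how likely it is that an issue affecting a given number of conversations is missed entirely. The figure of 50 affected conversations is hypothetical.

```python
from math import comb


def blind_spot_percent(total: int, reviewed: int) -> float:
    """Share of conversations that no analyst ever sees, as a percentage."""
    return round(100 * (total - reviewed) / total, 1)


def chance_sample_misses_issue(total: int, reviewed: int, affected: int) -> float:
    """Probability that a uniformly random sample contains none of the affected conversations.

    Assumes sampling without replacement: choose `reviewed` conversations out of `total`,
    all of them drawn from the `total - affected` unaffected ones.
    """
    return comb(total - affected, reviewed) / comb(total, reviewed)


print(blind_spot_percent(10_000, 300))  # 97.0

# Hypothetical: an issue that touches 50 of the week's 10,000 conversations.
p_miss = chance_sample_misses_issue(10_000, 300, 50)
print(f"Chance the sample misses the issue entirely: {p_miss:.0%}")
```

The random-sample assumption is the favourable case. If the sample is chosen by convenience rather than at random, the chance of missing a localised issue can be higher.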

Statistics from AmplifAI found that only 25% of contact centres have fully integrated AI automation into their daily workflows, which means the majority are still relying on processes that were designed for a much smaller, simpler operating environment.

The problem with low sampling rates is not just the volume of what gets missed. It is that the sample is rarely representative. QA teams tend to select interactions based on what is easiest to review, what has already been escalated, or what falls within a scheduled review window. Systemic issues, such as a training gap that affects 40% of an agent’s conversations or a compliance risk that only shows up in calls from a particular queue, stay hidden.

U.S. companies lose an estimated $75 billion annually due to poor customer service, a figure that has not moved even as AI investment has accelerated. The gap is not in tools. It is in integration, and QA is one of the clearest places where that gap shows up.

Electric Ireland deployed EdgeTier’s AI-powered QA and analytics capabilities across their contact centre, giving team leaders full visibility into interaction quality for the first time. Coaching conversations became targeted and evidence-based rather than relying on a manager’s instinct or a randomly selected call.

The result was a 21% improvement in CSAT scores. The improvement was not driven by adding headcount or overhauling processes. It came from understanding, at scale, where quality was falling short and fixing it.

A European health and wellness retailer with over 100 agents had a QA process that was entirely manual: analysts juggled disconnected systems, and a single evaluation took over half an hour. Insights only emerged at the end of the monthly reporting cycle.

After implementing EdgeTier, the team moved from two QA reviews per hour to four or five. CSAT scores rose by 16 points. Reporting that previously took days could be produced in minutes.

One concern that comes up regularly when AI QA is introduced is how agents will respond to being evaluated on 100% of their interactions rather than a small sample. In practice, the experience tends to be more positive than expected, for a straightforward reason.

Random sampling feels arbitrary to agents. Being scored on a handful of conversations chosen at random, then having that score used to inform a development conversation, can feel unfair. AI QA replaces that arbitrariness with consistency. Every agent is evaluated on the same criteria, applied in the same way, across their full body of work. Most agents find this fairer than the alternative.

In 2026, traditional contact centre quality management practices (manual call sampling, subjective scoring, siloed systems, and delayed feedback loops) are no longer considered sustainable as interaction volumes grow. The shift toward AI QA is as much about improving the agent experience as it is about protecting the customer experience.

The case for AI quality assurance is not complicated. Reviewing 2–5% of interactions and calling it quality assurance has always been a compromise. The only reason it persisted was that reviewing more was not feasible at scale. AI makes full coverage feasible, consistent, and affordable, connecting the insights it surfaces directly to the coaching and development workflows that actually improve performance.

The contact centres seeing the strongest results from AI QA are not treating it as a reporting tool. They are treating it as the foundation of a continuous improvement loop: surface quality issues at scale, direct human attention to where it matters most, and track the improvement over time.

Frequently Asked Questions:

AI QA reviews 100% of interactions across all channels. This is the core difference from traditional QA, which typically samples 2–5% of conversations. Full coverage means no interaction goes unreviewed, and no systemic quality issue goes undetected.

Speech analytics is one component of AI QA. It refers specifically to the analysis of voice interactions: transcription, keyword detection, sentiment analysis on calls. AI QA is a broader capability that applies quality evaluation across all channels, not just voice, and connects the output to scoring, coaching, and compliance workflows.

AI QA does not replace human QA analysts, and that is not the goal. AI QA changes what QA analysts spend their time on. Instead of manually listening to randomly selected calls, analysts focus on the interactions the AI has already identified as high-priority: the flagged conversations, the coaching opportunities, the compliance risks. Most organisations using AI QA report that their analysts are more effective and more engaged, rather than redundant.

Quality criteria are defined by your QA team and configured in the platform. They typically mirror your existing scorecard or quality framework, translated into a format the AI can evaluate consistently. Most platforms allow you to weight criteria differently, set thresholds for flags, and refine the framework over time based on what the data surfaces.

The connection between QA data and coaching is one of the most important design decisions in any AI QA implementation. The most effective setups route QA insights directly into coaching workflows: team leaders receive a prioritised list of conversations to review, linked to specific quality flags, and can conduct targeted coaching conversations with clear evidence in hand. EdgeTier Coach is built to support exactly this kind of integrated coaching loop.

If you want to understand what is really happening in your contact centre, across every interaction, every agent, every channel: EdgeTier Coach is a good place to start.

For a broader view of what is driving contact volume and customer sentiment, EdgeTier Explore surfaces those insights across 100% of your interactions in real time.

And if you want to catch quality-affecting issues like delivery problems, product bugs, and policy confusion before they compound, EdgeTier Sonar provides real-time anomaly detection built specifically for contact centres.


"It has reduced the time for the quality assurance process as it provides clear data and a very robust direction on where to look and what matters the most."

"We now have highly detailed understanding of agent performance, not just on key agent metrics, but also on how customers react to our agents and the emotions of our customers feel when talking to our team."

"We’re a big business, so getting the right people to agree and fix something hasn’t always been easy. Now we’ve got one version of the truth—it’s much easier to align and act"

Let us help your company go from reactive to proactive customer support.
